Report? Or read on?

Sometimes things don't go as planned. Last year I was writing a new blog post, but it ended up being scrapped. I found something I didn't expect, and ethics won in that case, since I think reporting it was the right thing to do.

It was May 15, 2019 and I was writing a blog post about cURL for the OSINT Curious Project, when I stumbled upon something interesting: the Flickr API. I started writing, and while doing a load of research with the help of the Flickr API developer documentation, I gathered it was actually really easy to:

- Get EXIF information (sometimes even when it was hidden for the web browser)

- See information about the photo

- Full profile/account info

- Public contact list

- Search users by nickname

- Search users by email
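As a rough illustration of the list above, here is a minimal sketch of how such requests can be put together. The endpoint and method names are taken from Flickr's public API documentation as I remember it, so treat them as assumptions and check the current docs. The `METHODS` table, the `build_flickr_url` helper and the `YOUR_API_KEY` placeholder are made up for this example. The code only builds the URL and does not send anything.

```python
from urllib.parse import urlencode

# Assumed REST endpoint from the public Flickr API documentation.
FLICKR_REST_ENDPOINT = "https://api.flickr.com/services/rest/"

# Documented method names that roughly match the list above (assumed mapping).
METHODS = {
    "exif": "flickr.photos.getExif",                    # needs photo_id
    "photo_info": "flickr.photos.getInfo",              # needs photo_id
    "profile": "flickr.people.getInfo",                 # needs user_id
    "contacts": "flickr.contacts.getPublicList",        # needs user_id
    "search_by_name": "flickr.people.findByUsername",   # needs username
    "search_by_email": "flickr.people.findByEmail",     # needs find_email
}


def build_flickr_url(method, api_key, **params):
    """Return a GET URL for one Flickr API method (hypothetical helper)."""
    query = {
        "method": method,
        "api_key": api_key,
        "format": "json",        # ask for JSON instead of the default XML
        "nojsoncallback": "1",   # plain JSON, no JSONP wrapper
    }
    query.update(params)
    return FLICKR_REST_ENDPOINT + "?" + urlencode(query)


if __name__ == "__main__":
    # Placeholder key and username, purely for illustration.
    print(build_flickr_url(METHODS["search_by_name"], "YOUR_API_KEY",
                           username="example_user"))
```

The printed URL can be passed to cURL or opened in a browser, which is all I needed for the examples I had in mind.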

And the best thing of all was that they already provided you with an API key, so I didn't even need to make an account! But that isn't actually the reason why I'm writing this blog post. The reason is a rather interesting discovery that made me send in a responsible disclosure and scrap the blog post itself.

⚠ No worries, this won't be a highly technical blog so keep on reading.

⚠ No, the issue found in this case was absolutely not the most severe one I've ever reported. But that isn't the point of this blog.

What is a Responsible Disclosure?

When you find a vulnerability or flaw in a product, whether that is a website, application or device, you have different options on how to communicate this discovery. Of course you can tweet about it or write a proof of concept and dump it on GitHub for the world to see. This is called a full disclosure, and while it has the advantage that the company feels pressured to fix the problem as soon as possible, it's usually regarded as a lesser option. On the other side we have the private disclosure model, where details are shared with the company and they act at their discretion. It may be that they never fix it, or that they've written in their terms that researchers aren't allowed to share their findings with the world. While it may ethically be a better option, it isn't really perfect.

And that is where the third option comes in: the 'responsible disclosure', also called 'coordinated disclosure'. Here a researcher sends the findings to a company directly or via a third party, and they agree that after the issue is fixed, the details can be published and shared with the world. Bug bounty platforms coordinate this for the researchers, with the benefit of working as a 'proxy' between the companies and researchers, while a direct approach has other advantages. With a direct approach it's customary to agree on a timeframe in which the fix is developed and rolled out, so the company has a reasonable amount of time before the findings are published. With bug bounty platforms, it's not unusual for companies to take their time fixing the issue while the researcher is waiting for approval to publish their findings, although there usually are some guidelines about that.

And such was the case here. I probably could have gone public after 180 days, according to the rules, but I decided to be patient and wait it out. But last month there finally was some news: The issue had been fixed and it was time for me to start writing this post. By the way, if you are interested in how the Dutch handle responsible disclosure, and want to learn about the legal aspects of this for hackers and researchers, I strongly recommend you read the ebook Helpful Hackers. It can be downloaded for free from the author's website, or if you want to support the author, do buy a copy at your local Amazon.

How it Started

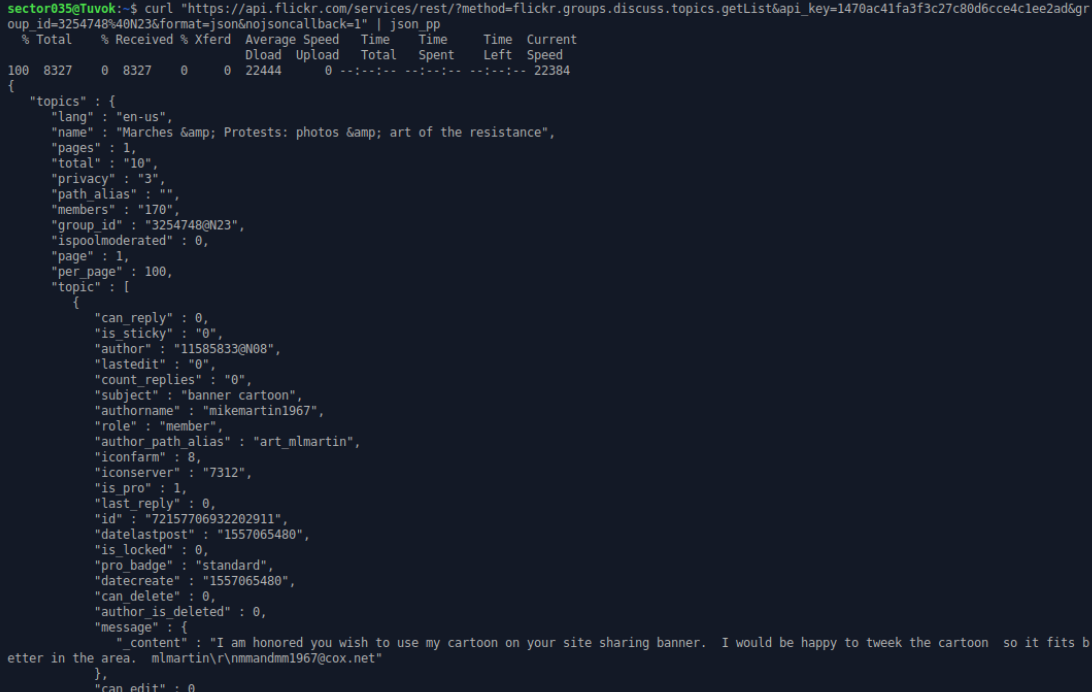

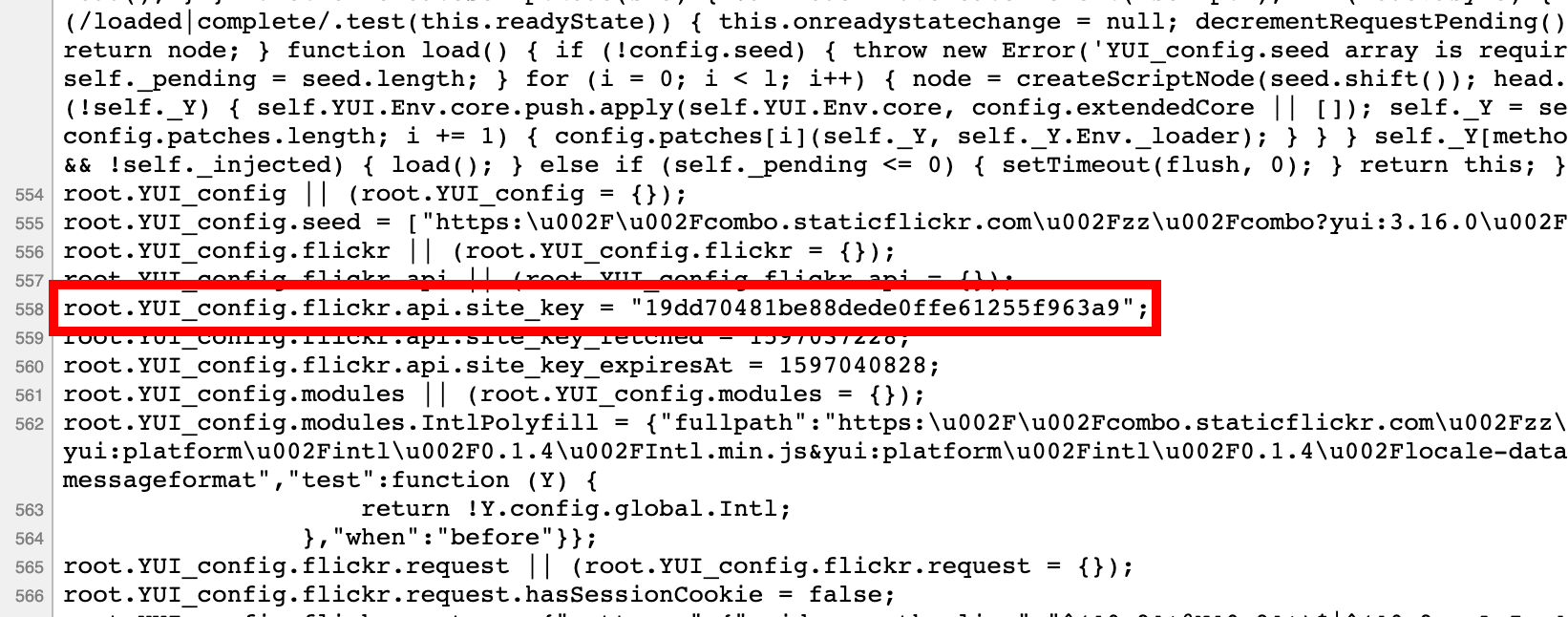

As said above, it was May 2019 and I was busy writing a blog post on APIs and cURL, browsing around for some websites to use in the examples. I found the Flickr developer guide and, to be honest, I actually recommend reading guides like that from cover to cover when you are looking for interesting things. In between reading about different endpoints, I scrolled through the source code of their website and found an actual API key that was used by their web frontend. Curious as I was, I added that API key to a test and I actually managed to retrieve information! That meant I didn't have to apply for some kind of API access, and didn't even need an account in the first place!

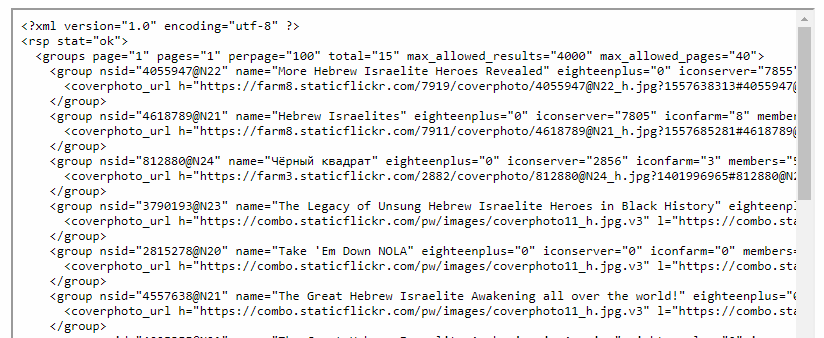

The second thing I did was try out what I was able to do with this API key. It was clear to me that Flickr used their own API to retrieve information from the back-end to serve content to users that aren't logged in. And after some testing, I found the section about groups. Within Flickr you are able to create groups, where you can post photos but also have a discussion forum. Usually these groups are all about photography, techniques, locations and such. But of course, there are lots of other groups out there. From antifa to environmentalists, and from crochet patterns to groups focussing on Israel.

And then, I stumbled upon something interesting: Groups that were partially closed for members only...

Improper Access Control

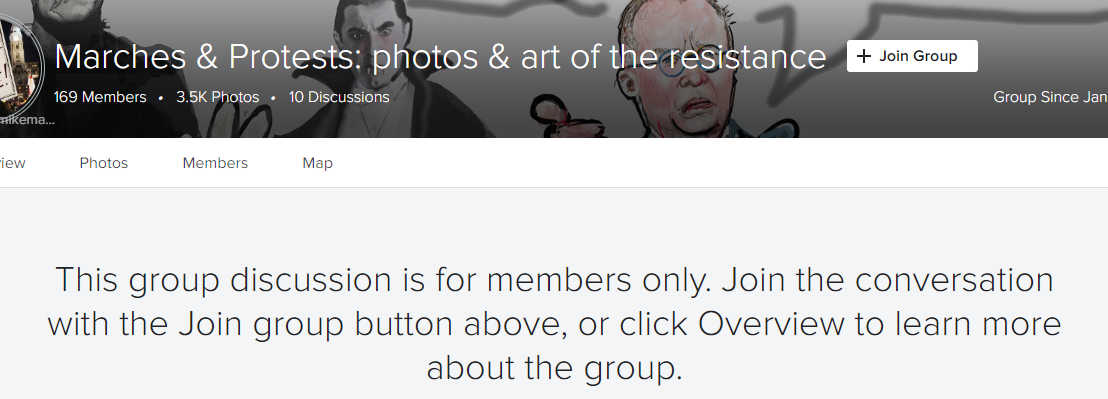

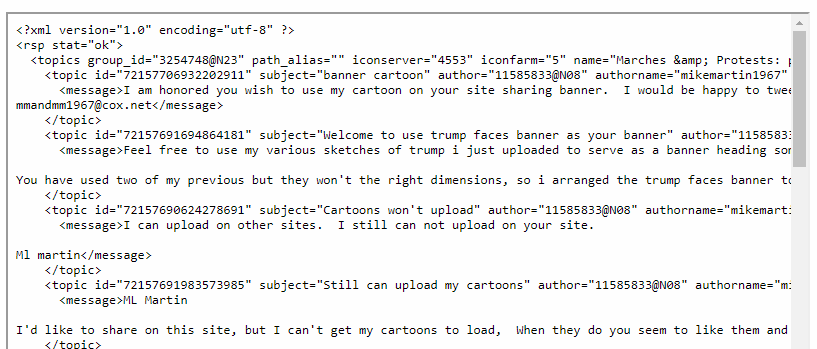

Flickr has the option to create a discussion forum where you can talk about your area of interest. The group itself can be public or private, where a private group can't be found and isn't shown in a search. Group membership has two options too: Open for all, or invitation only. Further options and settings can be set by the administrator of the group, giving some more control over what to show and what to hide. And at that moment I stumbled upon the group called "Marches & Protests: photos & art of the resistance". What made it interesting was that they had their discussion forum locked down for members only.

With the developer guide open I started poking around a bit and I realised that the developers at Flickr had made a mistake regarding access control: their own web frontend API key actually had access to this closed-off forum, and showed me the contents of this supposedly member-only forum. And that is when I started thinking: Do I pursue this as an opportunity to find some juicy information that should not have been open to the public? Or do I act ethically and send Flickr a report about this finding?

I sometimes add links to things like breached data or search engines for misconfigured S3 buckets or databases in my newsletter, and this was something similar, yet slightly different. On the one hand there is data that was leaked or hacked and is already up for grabs for everyone, or developers that completely ignore warnings and set-up guides when they open up their AWS bucket for the world to see. On the other hand we have developers that missed something small but crucial in the I-don't-know-how-many million lines of code to secure one particular thing. So I chose to head over to HackerOne to file a report on this. And even though many Flickr bugs had been found by lots of researchers, nobody seemed to have spotted this one. That is also when I decided to stop any research I was doing for that particular blog post. The whole chapter on Flickr got scrapped, and even a private blog post about all the API endpoints that I tested with the public API key for genuine OSINT purposes was binned. And when Aware Online published a blog item about Flickr, I was still patiently waiting for Flickr to fix the issue, which took them well over a year. I finally received word that they fixed the issue some time in July, so it was time to write a little article about this.

Not just Googling

There are still people who think that OSINT, or digital research, online investigation or whatever you want to call it, is just some fancy Googling and using some websites to find someone. I, on the other hand, love to dive into websites, figure out (mobile) applications, intercept network traffic or go over source code and developer guides. I do that to find out how I can help other people make new or better tools, to help them find new ways to gather information or to bypass restrictions that were put in place to stop exactly that. It's sort of a friendly cat-and-mouse game between researchers and developers. The bug described here wasn't rocket science and could easily have been found by a lot of people, but that isn't the point I'm trying to make.

Sometimes finding something clashes with the ethical side of life. The line between those two worlds is very thin and not always clear, not even to me sometimes. I try to give information to the community, while keeping an ethical state of mind while doing so. And that brings along the rare moments where I get into situations where there is a choice to be made:

Report? Or read on?