Week in OSINT #2023-24

Another WiO is here, with a little side-step into the world of security assessment, or hacking, and some other OSINT-y goodness!

This week I touch on a conference in Paris that I would love to attend some day, although this year it wasn't meant to be. Besides that, I've got Burp Suite, Bellingcat and some other interesting topics to cover:

- leHACK 2023

- Burping Genymotion

- Bellingcat OSM Search

- Emotional Intelligence

- PodText AI

Tip: leHACK 2023

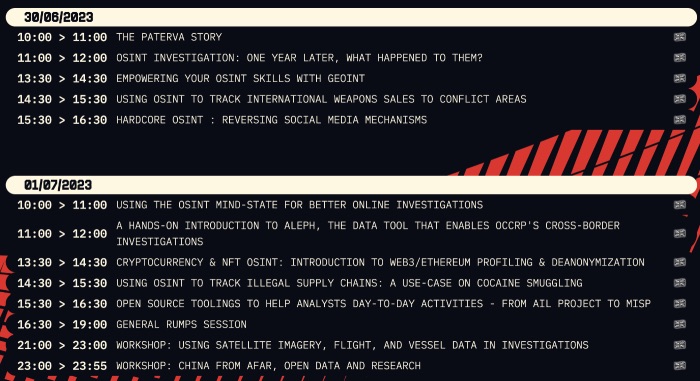

On Friday, June 30, one of the largest (or maybe even the largest) hacking conferences in Paris kicks off: leHACK. Don't think this is just about hacking, though, since that is only one part of the conference. Like other recent conferences, it has a full OSINT track in the "OSINT village": two days of open source goodness, with great talks from speakers from all over the world. Do check out the line-up, and get your ticket while you still can. Thanks for the heads-up, Sylvain!

Link: https://lehack.org/

Media: Burping Genymotion

Jake Creps has lately been sharing some interesting findings made with the browser's developer tools, and he recently shared a video over on Twitter in which Cameron Cartier from Black Hills talks about capturing the network traffic of an emulated Android phone. Intercepting proxies like Burp Suite are one of the best ways to inspect network traffic, and looking at what an app sends and receives can reveal information that never shows up on its screen. This video might not be for everyone, but it does highlight how the knowledge of security researchers can help OSINT practitioners find new ways to gather interesting information.

Link: https://www.youtube.com/watch?v=aqqdy7460yo

Tool: Bellingcat OSM Search

Bellingcat's extremely useful OSM Search tool has been posted to GitHub! So if you need to run your own instance of this tool, you can now do so yourself. It is one of several tools Bellingcat has been publishing, and more are coming out of their hackathons. Some amazing tools that can help investigators have appeared lately, and I urge you to keep track of the results of these hackathons.

GitHub: https://github.com/bellingcat/osm-search

Hackathon: https://www.bellingcat.com/resources/...

Article: Emotional Intelligence

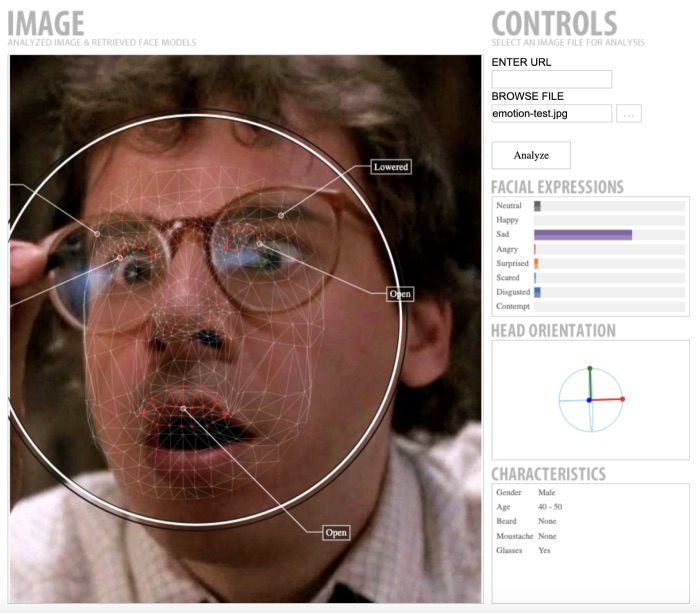

Twitter user @rezaduty pointed me to an article about tools that detect or interpret emotions and moods. It lists multiple websites that can automatically determine facial expressions or a 'mood', with links to image, video and text analysis tools and an explanation of how to use them. It also gives an example prompt for using ChatGPT to read the 'mood' of a text.

Do check the list of warnings at the end, though, because automated emotion detection is far from perfect. Some tools, like Noldus, only accept photos that contain nothing but a face. The outcome is also based solely on the non-verbal part of what is a complex social interaction. On top of that, different cultures have different perceptions, rules or even meanings for something like a smile. And as the example in the image shows, the results are not always correct.

Link: https://hadess.io/emotional-intelligence

Site: PodText AI

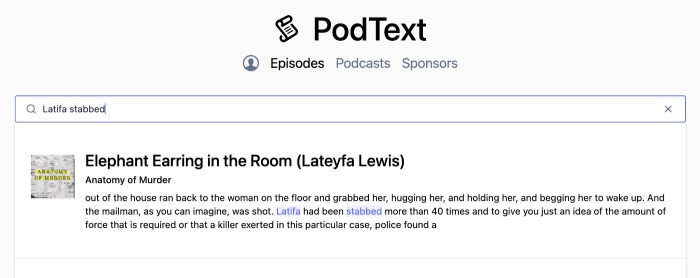

The website PodText has been indexing a selection of podcasts and uses AI to transcribe them. It isn't fully up to date with all the shows it indexes, but when I searched for some info on one of my favourite podcasts, it turned up straight away. The best part is that simply highlighting part of a transcript plays the episode from that point on. An amazing project that could be helpful when you are looking for more context or information on a particular subject.

Link: https://podtext.ai

FUNINT: This Week's Meme

I can type pretty fast and easily keep up when taking notes during meetings, but the self-confidence I gain in those moments isn't enough when I try to transcribe something when it really matters!

Have a good week and have a good search!