Week in OSINT #2021-09

Your weekly OSINT newsletter is back with information from week 9. Some things were shared last week; the rest I found myself while browsing around for new tools!

From the dark web to legal guidelines on collecting evidence: anything can show up in this newsletter, and so it should, because the field of OSINT isn't just browsing Facebook or finding out where a photo was taken. OSINT can be applied in any field where investigation is needed. To illustrate that, I have the following things for you:

- Dark Web Investigations

- Dark Web Guidelines

- Google Bookmarklets

- Minet

- Redefining OSINT on Cybercrime

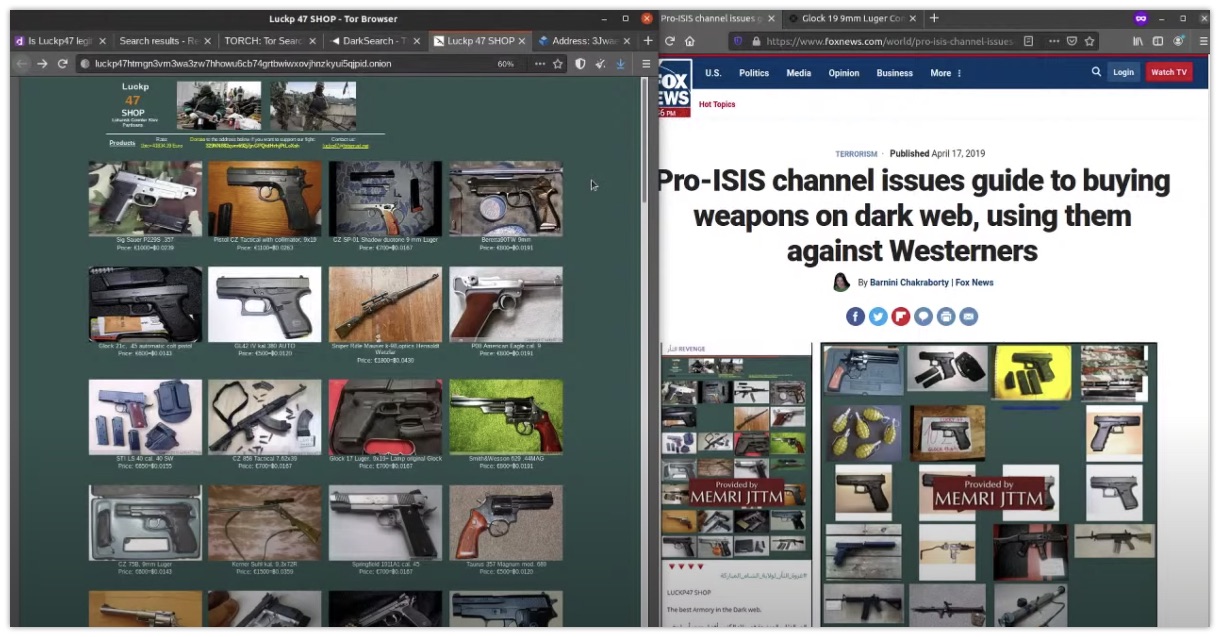

Media: Dark Web Investigations

Some people consider visiting sites on the dark web a scary practice, although it isn't that different from browsing the regular internet. Finding sites and searching for content, however, works slightly differently. Sinwindie did a live stream some time ago in which he took on a specific subject and showed how he investigates on the Tor network. He shows his work and shares some valuable resources along the way.

Link: https://youtu.be/YvV947T-F90

Article: Dark Web Guidelines

Handy guidance from US DOJ for those of you doing #OSINT research or interacting in darkweb or criminal forums: https://t.co/gsRp0TrrNW

— 𝚝𝚑𝚎 𝚐𝚞𝚖𝚜𝚑𝚘𝚘 (@thegumshoo) March 3, 2020

This document comes from the US DOJ and has some really good guidelines and tips for private parties, such as cybersecurity experts or investigators, on how to perform investigations on the dark web. Legal perspectives, best practices, how to gather information, and several examples: everything seems to be neatly covered. If you plan to investigate something on Tor, I2P, or any kind of shady forum, I'd suggest you read this, in case you want to share your findings with the DOJ later.

Link (direct link to PDF!): https://www.justice.gov/criminal-ccips/page/file/1252341/download

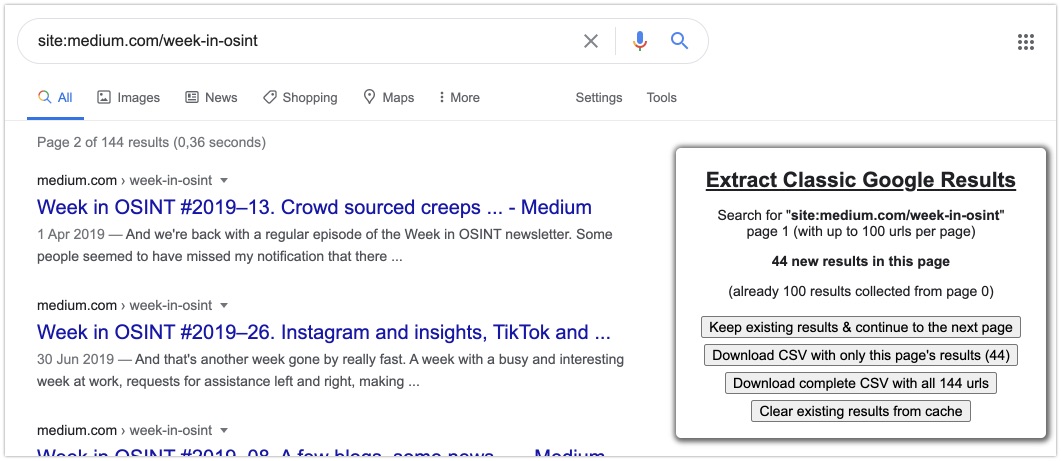

Tool: Google Bookmarklets

Via MediaLab, one of the creators of Gephi, I found some handy scripts and tools. One of them is a set of bookmarklets for extracting Google links. The first bookmarklet lets you quickly switch to a Google page in any language and extend the results page to up to 100 results without going through your settings. The second caches the links it finds and lets you extract hundreds of them in a single click. Even though these bookmarklets are quite old already, they still work like a charm!
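To give an idea of what switching language and result count without touching your settings can look like, here is a minimal Python sketch that builds a search URL by hand. It is not how the bookmarklets themselves are implemented. The `hl` (interface language) and `num` (results per page) query parameters are an assumption on my part: Google has historically accepted them, but it may ignore or change them at any time. The function name `google_search_url` is made up for this example.

```python
from urllib.parse import urlencode


def google_search_url(query, language="en", results=100):
    """Build a Google search URL with a language and result count.

    Assumption: `hl` sets the interface language and `num` the number
    of results per page. Google may ignore either parameter.
    """
    params = {"q": query, "hl": language, "num": results}
    # urlencode escapes the values, so spaces in the query are safe.
    return "https://www.google.com/search?" + urlencode(params)


print(google_search_url("week in osint", language="nl"))
```

Pasting the printed URL into a browser is the quickest way to check whether the parameters are still honoured.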

Link: https://medialab.github.io/google-bookmarklets/

GitHub: https://github.com/medialab/google-bookmarklets

Tool: Minet

Minet is another tool from MediaLab. Initially I had some issues installing it, but once I found out I had to update pip to the latest version, things were solved right away and I could run a small test. This tool lets you very quickly download a huge set of URLs and save them offline as single-page HTML files. I must say, it worked like a charm, apart from some issues with the layout of my own website. But Minet isn't only about dumping URLs into single HTML files; it can do a lot more, like extracting raw text, collecting data from CrowdTangle, scraping tweets or YouTube comments, and so on. After a run, it also creates a nice log file that gives you the URL, the status, the UUID filename and the encoding, like this:

index,url,resolved,status,error,filename,encoding

0,https://sector035.nl,,200,,f692e664-b947-4ab2-b063-8cb6003ae818.html,utf-8

1,https://osintcurio.us,,200,,a7c63094-d4dd-485d-9951-6983c65357c8.html,utf-8

If you are familiar with Python, and aren't afraid to test out some tools, I do recommend playing with Minet. And if you aren't familiar with it, there are even compiled binaries available.
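Since the log file is plain CSV, it is easy to process further. Here is a minimal sketch, using only Python's standard library, that reads a report like the one above and maps each fetched URL to its saved file. The sample rows are copied from my test run; the function name `summarize_report` and the rule that only status 200 counts as saved are my own assumptions, not something Minet prescribes.

```python
import csv
import io

# Sample rows copied from the report shown above.
REPORT = """index,url,resolved,status,error,filename,encoding
0,https://sector035.nl,,200,,f692e664-b947-4ab2-b063-8cb6003ae818.html,utf-8
1,https://osintcurio.us,,200,,a7c63094-d4dd-485d-9951-6983c65357c8.html,utf-8
"""


def summarize_report(handle):
    """Split report rows into saved pages and failed URLs.

    Assumption: a row counts as saved when its status is 200 and it
    has a filename; everything else is treated as failed.
    """
    saved = {}
    failed = []
    for row in csv.DictReader(handle):
        if row["status"] == "200" and row["filename"]:
            saved[row["url"]] = row["filename"]
        else:
            failed.append((row["url"], row["status"], row["error"]))
    return saved, failed


saved, failed = summarize_report(io.StringIO(REPORT))
for url, filename in saved.items():
    print(f"{url} -> {filename}")
print(f"{len(failed)} URL(s) failed")
```

For a real report, open the CSV file with `open(path, newline="", encoding="utf-8")` and pass the handle in instead of the `io.StringIO` sample.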

Link: https://github.com/medialab/minet/

Article: Redefining OSINT on Cybercrime

This article by Julie Clegg covers challenges that exist in the investigative work we all do, from technological advancement and the challenge of moving data and information securely to a standard way of preserving evidence. Maybe it is a good idea to take a good look at the traditional OSINT processes in your organisation. Are there specific areas that should adapt to the ever-changing technical landscape? What do my readers think?

Link: https://eforensicsmag.com/redefining-osint-to-win-the-cybercrime-war-by-julie-clegg/

Have a good week and have a good search!